Preamble: if you want the code and don't care for my ramblings here you go -

http://git.sysphere.org/freebsd-pkgsign/

Coming from GNU/Linux where gpg-agent was available to

facilitate key management when signing repositories or packages I

missed that feature. FreeBSD however uses SSL not GPG. But those keys

can be read by the ssh-agent and we can work with

that. Recent SolarWinds supply chain attack is a good reminder to

safeguard your software delivery pipeline.

If you announced PUBKEY repositories to your users or

customers up until this point you would have to switch to

FINGERPRINTS instead, in order to utilize the

pkg-repo(8) support for an external

signing_command.

The Python Paramiko library makes communication with an agent

simple and it is readily available as the py37-paramiko

package (or port) so I went with that. There was however a small

setback (with RSA sign flags) but more about that at the bottom of the

article. If you would prefer a simpler implementation of the agent

protocol and to have a self sufficient tool I found sshovel to be

pretty good (and confirmed signing is implemented well enough to work

for this purpose). I didn't have time to strip out (now unnecessary)

encryption code, and more importantly didn't have time to port

sshovel to python3 (as python2 is deprecated in FreeBSD).

We are all used to digests of public keys serving as fingerprints and

identifiers. However Paramiko derives fingerprints from the public key

in the SSH format. For simplicity I decided to flow with it and

reference keys by Paramiko fingerprints. The "--dump"

argument is implemented as a helper in pkgsign to list

Paramiko fingerprints of all keys found in ssh-agent. But

before we dump fingerprints if your key(s) is on the file-system

without a passphrase (which they really shouldn't be) it's time to put

a passphrase on them now (and don't forget to shred the old

ones). Here's a crash course on ssh-agent operation, and how

to get pkgsign to connect to it:

$ ssh-agent -t 1200s >~/.ssh/ssh-agent.info $ source ~/.ssh/ssh-agent.info $ ssh-add myprivatekey.key.enc Enter passphrase: [PASSPHRASE] $ ./pkgsign --dump INFO: found ssh-agent key [FINGERPRINT]If you wanted to automate key loading through some associative array etc. It would be beneficial to rename your private key to match the fingerprint. But you don't have to. However for the public key it is expected (unless you change the default behavior). This is because converting the public key obtained directly from the agent to the PEM pkcs8 format (that pkg-repo(8) is expecting in return) would be more code than this entire thing. It is much simpler to just read the public key from the file-system and be done with it.

# ln -s /usr/local/etc/ssl/public/mypublickey.pub /usr/local/etc/ssl/public/[FINGERPRINT].pubThe ownership/permissions/chflags scheme on the encrypted private key and parent directories is up to you. Or plug it in on external media, or cryptokey, or scp it only when needed, or shutdown the signing server after signing... This is crucial. Agent availability is an improvement, but don't get complacent because of it.

#!/usr/bin/env bash

declare -A REPO_KEYS

REPO_KEYS['xfce']=FINGERPRINT11111111111111111111

REPO_KEYS['gnome']=FINGERPRINT22222222222222222222

# /path/to/repos/xfce/FreeBSD:12:amd64/

ARG=$1

SOFTWARE_DISTRIB="${ARG%/*/}"

SOFTWARE_DISTRIB="${SOFTWARE_DISTRIB##/*/}"

SOFTWARE_DISTRIB_KEY="${REPO_KEYS[$SOFTWARE_DISTRIB]}"

/usr/sbin/pkg repo $ARG signing_command: ssh signing-server /path/to/pkgsign ${SOFTWARE_DISTRIB_KEY}

How to bootstrap your users or convert existing ones to the new

repository format is explained in the manual very well but let's go

over it anyway. Since the command to generate the fingerprint may look

intimidating to users you could instead opt to pregenerate it and

host it along side the public key:

# mkdir -p /usr/local/etc/pkg/keys

# mkdir -p /usr/local/etc/pkg/fingerprints/YOURORG/trusted

# mkdir -p /usr/local/etc/pkg/fingerprints/YOURORG/revoked

# fetch -o "/usr/local/etc/pkg/keys/YOURORG.pub" https://www2.you.com./YOURORG.pub

# sh -c '( echo "function: sha256"; echo "fingerprint: $(sha256 -q /usr/local/etc/pkg/keys/YOURORG.pub)"; ) \

>/usr/local/etc/pkg/fingerprints/YOURORG/trusted/fingerprint'

# emacs /usr/local/etc/pkg/repos/YOURORG.conf

...

#signature_type: "PUBKEY",

#pubkey: "/usr/local/etc/pkg/keys/YOURORG.pub",

signature_type: "FINGERPRINTS",

fingerprints: "/usr/local/etc/pkg/fingerprints/YOURORG",

...

If you want to evaluate pkgsign with OpenSSL pkeyutl

first to confirm all of this is possible you can do so for example

like this (but only after patching Paramiko as explained in the

paragraph below this one):

$ echo -n "Hello" | \ openssl dgst -sign myprivatekey.key.enc -sha256 -binary >signature-cmp $ echo Hello | \ ./pkgsign --debug [FINGERPRINT] >/dev/null $ echo -n "Hello" | \ openssl sha256 -binary | openssl pkeyutl -verify -sigfile signature-Hello \ -pubin -inkey mypublickey.pub -pkeyopt digest:sha256 Signature Verified SuccessfullyNow for the bad news. To make this project happen I had to patch Paramiko to add support for RSA sign flags. I submitted the patch upstream but haven't heard anything back yet. It would be nice of them to accept it, but if it takes a very long time then luckily the changes are very minor. It is trivial to keep moving it forward in a py37-paramiko port.

--- paramiko/agent.py 2021-01-15 23:03:50.387801224 +0100

+++ paramiko/agent.py 2021-01-15 23:04:34.667800388 +0100

@@ -407,12 +407,12 @@

def get_name(self):

return self.name

- def sign_ssh_data(self, data):

+ def sign_ssh_data(self, data, flags=0):

msg = Message()

msg.add_byte(cSSH2_AGENTC_SIGN_REQUEST)

msg.add_string(self.blob)

msg.add_string(data)

- msg.add_int(0)

+ msg.add_int(flags)

ptype, result = self.agent._send_message(msg)

if ptype != SSH2_AGENT_SIGN_RESPONSE:

raise SSHException("key cannot be used for signing")

A few years ago, sitting in an emergency room, I realized I'm not

getting any younger and if I want to enjoy some highly physical

outdoor activities for grownups these are the very best years I have

left to go and do them. Instead of aggravating my RSI with further

repetitive motions on the weekends (i.e. trying to learn how to suck

less at programming) I mostly wrench on an old BMW coupe and drive it

to the mountains (documenting that journey, and the discovery of

German engineering failures, was best left to social media and

enthusiast forums).

Around the same time I switched jobs, and the most interesting stuff I

encounter that I could write about I can't really write about, because

it would disclose too much about our infrastructure. If you are

interested in HAProxy for the enterprise you can follow development on

the official blog.

Internet, in 2018, was not a safe place.

By this I don’t mean spam arriving in our inbox, viruses or malware lurking in software downloaded from less-reputable places, or phishing sites masquerading as our favorite e-commerce platforms.

These risks are real, but well understood and widely recognized. However, in the past years there has been an increasing evidence for, and occurrence of, completely different kinds of risk that most of us online are exposed to.

Examples of these are pervasive tracking of behavior online, appropriation of personal data by the apps or sites we use, data breaches, and junk media optimized to maximize engagement.

Before I go over each of these in more detail, a disclaimer: I don’t think everyone’s out to get me, or that big corporations such as Google or Facebook are inherently evil. I do think that companies, big and small, are incentivized to behave in ways that create or increase these risks. That is, the default is to behave in a way that makes things worse.

Start with tracking. Google and Facebook know every page you visit if it has Facebook or Google login, social or like buttons, embeds fonts or maps, uses Google Analytics or any of their dozens of APIs. So do the ad networks: a handful of major ones are used on most sites, and they track unique users so they can build your profile, optimize ad inventory that you see and retarget you. This means they follow you around the internet to show you ads for products you viewed but haven’t bought yet.

Google and Facebook, the portals to the online world for many, know the most about us. But they are not unique in this regard: companies such as Twitter, Amazon and virtually everyone else does it as well.

Is this really a problem? I believe so. I personally don’t like my privacy being violated at will by a random site I happen to visit. On a practical level, I understand that the companies collecting this data aim to maximize their shareholders’ value, not my benefit. While some amount of tracking is acceptable to improve the service I get — and people may have different notion of what’s acceptable to them — there should be a way to draw the line somewhere instead of going full-in.

Tracking can be countered by using an ad blocker, such as uBlock Origin or AdBlock Plus. Today’s ad blockers do more than just block annoying ads: they also disrupt all kinds of invasive tracking, and can be integrated in all modern browsers and mobile devices. This approach does have a side-effect of blocking ads too, depriving sites of revenue. However, at this point I don’t think browsing the web is at all viable without an ad blocker. To put it bluntly, the experience is horrible.

I also use DuckDuckGo, an alternative search engine with a focus on privacy and usability. Its results are usually slightly worse than Google’s, but it does have a few extra tricks up its sleeve (such as direct searching of a specific sites) and it’s easy to fall back to Google, so it’s tradeoff I’m willing to make. DuckDuckGo also has a browser extension which can also block tracking software and report site’s privacy score, among other things.

Finally, I use Firefox with Multi-Account Containers and First-Party Isolation features enabled. These are “block 3rd-party cookies” option on steroids, completely isolating each site so no cross-site tracking is possible. The side effect is disrupting features such as log in via Google or Facebook, comments or likes, and site widgets from 3rd party sites. Equipped with a good password manager (I use 1Password), I find this only mildly annoying.

On mobile, I use Firefox Focus, which behaves like a browser in incognito mode, making it easy to forget all history (including any tracking cookies) with a single tap.

The amount of information big internet giants track about us is dwarfed by the amount of data we freely give them: photos, videos, text posts, travel and purchase information, our plans, intentions, fears and desires. And for the most part, they can keep this data forever, use it as they like, including giving others access to it. This has been somewhat limited by the European GDPR and the series of privacy scandals involving Facebook intentionally and unintentionally giving others vast amounts of what should’ve been private data. But it is still largely in place for those not inclined to, or not aware that they have the option to, micro-manage what rights over their data they give Facebook and other big companies.

The problem here lies in not seeing through the implications of this. When you tell Facebook (or Google, …) something, it remembers it forever. For instance, that embarrassing photo or status update you hope everyone’s forgotten by now. That awkward private message that you sent as public instead. That photo of you six months old naked in a bathtub that your parents thought was infinitely cute and just had to share publicly at the time.

All of this will be used, to sell you stuff or to make you come back for more. If you get embarrassed, mobbed, fired or worse — hey, you shouldn’t have posted it online.

Which brings me to the best way to minimize this risk: treat everything you post as if you’ve shouted it on prime-time national TV. If you wouldn’t be comfortable letting the world know about it, don’t put it online.

The only exception to this is email and private messages. Data breaches notwithstanding, these usually come with privacy implied and companies take care to protect these. But even here, it pays to be cautious because your conversation peers might not be.

Another way to ensure your privacy online is respected is to periodically — say, once a year — visit privacy and security settings of the sites you use and verify that all the settings are to your liking. These companies have an annoying habit of changing available privacy controls which then default to something the company finds useful, not what you might’ve wanted.

Massive data breaches, exposing passwords, social security numbers or other private and sensitive information of thousands or even millions of users, are nowadays a common occurrence.

While perfect security is impossible, the fact is that companies are not incentivized to strive for this perfection. One of the larger data breaches, that of up to 40 million credit and debit card details of Target in 2013, cost the company $202 million in total. This is in comparison with $2.4 billion net income for the company in 2017.

The largest data breach in 2018 was that of the Marriot Starwood customers' data, affecting anywhere between 300 and 500 million customers.

Laws like the European General Data Protection Regulation (GDPR) and California’s Consumer Privacy Act are slowly changing things for the better, but there’s still a long road ahead.

Individually, the best protection is following security best practices such as not using the same password on multiple sites, using HTTPS, enabling 2-factor authentication where avilable, using end-to-end encryption for private messaging, and so on. This decreases the problems you have when (not if) one of the sites you visit has a data breach.

I use the term “junk media” for content that’s primarily designed to get eyeballs, not provide useful information, be interesting or entertain. A few examples are textual and video content farms, social media feeds optimized for engagement or viral content, or irrelevant “breaking news”. Again, the line here is blurry and everyone will have differing criteria.

Why am I mentioning junk media in a post about staying safe online? Similar to over-sharing of our personal data, this is something we do to ourselves without really thinking about it. Accumulating over the longer term, it can also have negative consequences for us.

Junk media may be “fun” or “interesting” in the sense that we have an instant reaction, just like junk food can be tasty while containing poor nutritional value. In either case, indulging in moderation is not a problem, but a steady diet of either won’t be good for our health.

The problem is that moderation doesn’t maximize revenue. In purely commercial terms, the winning strategy for the media companies is to maximize views and engagement while minimizing churn. The more time we spend on those sites and the more content we consume, comment on or share, the better. The quality of time spent for the consumer is of secondary importance — just good enough to prevent people from leaving.

Junk media is not confined to online. It’s equally present in the press, on the TV and the radio. In the past, there’s been a lot said of negative effects of too much TV. Comparatively little research has been done into negative effects of too much social media.

Not consuming too much junk media is as easy — or as hard — as not overeating junk food: just don’t do it. A more actionable advice is putting it “out of reach” so you won’t unthinkingly reach for it. For example, I open Facebook from an incognito browser and have 2-factor authentication enabled. This forces me to go through multi-step login process each time I want to visit, making it inconvenient enough that I only visit if I really want to. For the same reason I also haven’t installed a Facebook app on my phone — it makes it too convenient to dive back in.

I’ve titled the post “Digital hygiene”. As with the regular form, digital hygiene consists of small things we can do every day that improve our health and minimize health risks.

Starting with the security best practices, thinking about what kind of information we’re sharing (willingly or not) with companies and the larger public and the possible implications down the road, we can change our behavior ever so slightly to minimize the downsides, while still reaping the benefits, of living online.

This post is my attempt to raise your awareness of some of these things, share a few practical tips, and give you some food for thought.

Ubuntu je afrička riječ koju opisuju kao "previše lijepu da bi ju preveli na engleski". Esencija te riječi glasi da je neka osoba potpuna samo uz pomoć drugih ljudi. Naglasak je na dijeljenju, dogovaranju i zajedništvu. Kao stvoreno za open source. Dosta ljubavi, gdje je tu Linux, čujem kako već negoduju nestrpljivi čangrizavci. :-) Wolfwood's Crowd blog 2004.

Ubuntu koristim od prve inačice, 4.10, Warty Warthog. Ispod haube je bio, i još uvijek je, Debian. Canonical je napravio fino podešavanje, dodali su sastojke koji su Debianu nedostajali. Uveli su točno definirali ritam izlaženja od kojeg su odustali samo jednom, na prvoj LTS inačici, 6.06, Dapper Drake. U prvim godinama besplatno su slali instalacijske CD-ove širom svijeta.

Za sve je to bio zaslužan Mark Shuttleworth koji je zaradio brdo novaca nakon što je Thawte prodao VeriSignu. Taj novac mu je omogućio da postane drugi svemirski turist, ali za tu avanturu je učio i pripremao cijelu godinu dana, uključujući i 7 mjeseci u Zvjezdanom Gradu u Rusiji.

Nakon toga se posvetio razvoju i promociji slobodnog softvera, financirajući Ubuntu kroz kompaniju Canonical.

Ubuntu je u žarištu uvijek imao korisnika i jednostavnost korištenja, po čemu se dosta razlikovao od ostalih distribucija u to vrijeme. Zbog toga i nije bio omiljena distribucija za hard core linuxaše.

Meni se taj pristup i cijela priča oko Ubuntu distribucije dopala na prvu i zato sam tada i napisao...

Čini mi se da Ubuntu nije još samo jedna od novih distribucija koje niču kao gljive poslije kiše. Ima sve preduvjete da postane jedan od glavnijih igrača na Linux sceni. Wolfwood's Crowd blog 2004.

U ovih 14 godina koristio sam i neke druge distribucije. openSUSE, Fedora, Arch, Manjaro, CrunchBang. Upoznavao se s novim igračima, Solus, Zorin, elementary OS. CentOS na poslužiteljima. Mint i ostale distribucije koje za osnovu uzimaju Ubuntu. Međutim kad odaberete svoje desktop okruženje sve je to isti Linux. I onda prevagnu detalji, stabilnost i redovitost. LTS inačica na kritična računalo, najnovija na sve ostalo.

Ubuntu je vrlo brzo postao dobro podržan od strane onih koji proizvode aplikacije koje se ne nalaze u repozitorijima ili čije najnovije inačice još nisu tamo. Personal Package Archives je odlična stvar. U posljednje vrijeme manje koristim PPA, a više Snap .

Ubuntu donosi novosti, ali ima i promašaja. Unity, desktop sučelje koje je napravljeno posebno za Ubuntu, nakon otpora, kontroverzi i prihvaćanja ipak je umirovljeno i sada je GNOME glavno sučelje. Ubuntu Touch je trebao biti mobilna inačica za pametne telefone i tablete, ali izgleda da je završio u slijepoj ulici. Usklađivanje (Convergence) mi je bila odlična ideja, možda zbog toga što sam i sam razmišljao o tome. Ono po čemu se Ubuntu razlikuje od drugih distribucija je upravo ta težnja i neustrašivost da se krene u nešto novo.

Istina, brod je sada u mirnijim vodama, ali ne bi me začudilo da se Ubuntu opet uputi tamo gdje druge distribucije još nisu bile, ili uopće ne pomišljaju na to. Pa nije se valjda Mark umorio ili mu je ponestalo ideja?

Internet je knjiškim moljcima omogućio lakši pristup poslasticama za koje se prije trebalo dosta potruditi. Dobri ljudi su skenirali stare knjige i učinili ih dostupnim i drugima. Klasični knjiški moljci se zgražaju digitalije i koriste samo papirnate knjige, ali ja sam pragmatičan, bolje digitalna knjiga na disku nego papirnata u dalekoj knjižnici. I pretraživanje je lakše, brže i bolje. Nemaju sve knjige indekse, a puno listanja uništava staru knjigu i njezin požutjeli papir. Nekada sam nabavljao dosta starih knjiga (ili njihov reprint) koje su me zanimale, ali sada tražim samo one koje ne mogu naći u digitalnom obliku.

Kad govorim o skidanju starih knjiga onda mislim na knjige kojima su autorska prava istekla i postale su javno dobro. Po važećem zakonu o autorskom pravu ta prava ističu 70 godina nakon smrti autora. Do 1999. taj rok je bio 50 godina pa se na autore kojima je prije toga isteklo pravo ne primjenjuje novi već stari rok. Više detalja na eLektire.skole.hr.

Glavni interes su mi knjige na hrvatskom jeziku i dijalektima te knjige koje se bave ovim prostorima (uglavnom na latinskom).

Na pitanje Koji bi web site odnio na pusti otok? (ili na Mars, da budemo u skladu s vremenom) odgovor bi bio Internet Archive. Ne samo da gore ima hrpa knjiga, tamo je i arhiva web stranica, filmova, tv vijesti, zvučnih zapisa (npr. Greatful Dead), programa za računala (npr. Internet Arcade) i još puno toga.

Internet arhiva ima preko 15 milijuna slobodno dostupnih knjiga i tekstova. Pretraživanje nije baš najbolje, pa je bolje

koristiti Google (unesete pojam za pretraživanje i site:archive.org kako bi

pretragu suzili samo na tu web stranicu). Ta arhiva mi je bila prvo mjesto na

kojem sam našao puno naših knjiga, i to u vrijeme kad javno dostupne

digitalizirane građe kod nas skoro da nije ni bilo. Arhiva vam omogućava i

stvaranje profila pa možete uvesti nekog reda u svoje čitanje i pretraživanje.

Ono što mi se najviše dopada kod te arhive je mogućnost skidanja knjiga u više različitih formata, od kojih je jedan i moj omiljeni DjVu format. Knjige možete čitati i online, njihov preglednik je jedan od najboljih, a ako želite knjigu možete ponuditi i posjetiteljima vaše web stranice koristeći embed kod.

Knjige su sakupljene iz različitih izvora i kvaliteta skeniranja nije uvijek najbolja. Neke stranice imaju premalu rezoluciju, a kod nekih vidite prste onih koji su okretali stranice. Na ostatke gableca još nisam naletio.

Moj mali prilog toj arhivi je kratki prikaz Progon vještica u Turopolju.

Google ima projekt u kojem želi napraviti katalog svjetskih knjiga, a one čija prava su istekla možete čitati i preuzeti. Ako želite pronaći nešto što je objavljeno u nekoj knjizi onda je Google Books odlično mjesto za početak pretrage.

Slobodno dostupne knjige možete čitati u pregledniku ili preuzeti u .pdf formatu. Za Google korisnik nije baš u prvom planu pa tu ima malih nebuloza pa su neke knjige dostupne za pregled, ali ih ne možete preuzeti jer sadržaj nije dostupan za posjetitelje iz Hrvatske. Hm, pokušao sam preuzeti par knjiga, ali sad mi tu poruku javlja za sve koje sam pronašao da imaju oznaku ebook - free. Nedavno nije bilo tako. Ne iznenađuje me to od Googlea, mi smo nevažni korisnici. Većina tih knjiga je skenirana na američkim sveučilištima. Kao sponzori su, između ostalih, navedeni Google, Microsoft, a iste knjige se mogu naći i na Internet Archive stranici.

Google Books uglavnom koristim za pretraživanje i rijetke slučajeve kad je neka knjiga samo tu dostupna.

Nekada su se digitaloljupci morali osloniti na američke knjižnice, dosta te građe je bilo dostupno samo njihovim korisnicima ili posjetiteljima iz SAD-a, nešto od toga je bilo dostupno i ostalima. Kako je dotok digitalne građe počeo i s naše strane već nekoliko godina ne koristim te servise pa nemam neku preporuku.

HathiTrust Digital Library ima veliku kolekciju, dobru tražilicu i dobar preglednik. Za skidanje vam treba partner login (na popisu su američka sveučilišta).

Europa se malo trgnula pa možete koristiti Europeana Collections za početak pretrage. Uz pomoć te stranice pronašao sam Munich DigitiZation Center koji ima dosta materijala. Lopašićeve "Hrvatske urbare" uspio sam naći samo tamo.

Najveća domaća zbirka je DiZbi.HAZU. Dostupno je skoro 1800 knjiga i preko 1300 rukopisa. Kvaliteta skenirane građe je odlična. Sučelje je malo nespretno, pretraživanje je zadovoljavajuće, ali kad tražite više pojmova onda shvatite da bi mogla biti ii puno bolja.

Preglednik je vrlo jednostavan, bez nekih naprednih mogućnosti prikazuje stranicu po stranicu.

Navigacija u pregledniku knjige je loše riješena, najproblematičnije je skakanje ne neku određenu stranicu. Veza na stranicu nije riješena jednostavno (broj stranice u adresi) već je veza riješena uz pomoć nekog hasha/koda, ne postoji mogućnost odabira točno određene stranice, pa morate biti spremni na puno listanja i klikanja.

Pratim tu zbirku dosta dugo, nekada je bilo moguće preuzimanje odabranih stranica, pa je to onda bilo onemogućeno, a sad vidim da je opet moguće preuzimanje (samo za knjige s isteklim autorskim pravima).

Tehničko rješenje je Ingigo platforma koja se koristi za skoro sve domaće projekte digitalizacije.

Zbirka Nacionalna i sveučilišne knjižnice u Zagrebu također koristi Indigo platformu. Ova zbirka se tek u zadnje vrijeme počela popunjavati i sadrži nešto preko 500 knjiga. Malo sam razočaran, s obzirom na dostupnost građe očekivao bi više sadržaja u ovoj zbirci, ali valjda će s vremenom ona sve više rasti.

Koristi noviju inačicu platforme, preglednik je moderniji s boljom navigacijom i pregledom po stranicama, ali ne nude preuzimanje knjiga.

Zbirka Knjižnica grada Zagreba koristi stariju inačicu Indigo platforme. Nema skidanja, jednostavan preglendik i 123 knjige.

FOI ima zanimljivu Metelwin digitalnu knjižnicu i čitaonicu. Osim starih knjiga ima i novih izdanja te arhiva časopisa.

Preglednik je dobar, omogućava jednostavnu navigaciju, ima dosta naprednih mogućnosti te mogućnost ugrađivanja na druge web stranice. Nisam pronašao mogućnost za preuzimanje knjige.

Nacionalna sveučilišna knjižnica u Zagrebu je digitalizirala dosta novina i časopisa koji su dostupni na stranicama Stare hrvatske novine i Stari hrvatski časopisi. Tražilice su dobre kad tražite jednostavne pojmove. Kvaliteta skeniranog materija je odlična, ali preglednik je spartanski (pregledava se slika po slika u prozoru koji iskače). Za listanje se koristi Microsoft Silverlight kojeg je i vrijeme zaboravilo.

Jučer je u Europskom parlamentu prihvaćen prijedlog direktive o autorskim pravima. I dok neki smatraju da se radi samo o zaštiti autora drugi misle da se radi o katastrofi i pozivaju na borbu.

Kao i u slučaju kolačića, gdje se zaštita privatnosti mogla riješiti na puno efikasniji i jeftiniji način, tako i sada oni koji su uključeni u direktivu jednostavno ne razumiju dosta toga.

Ovdje ću se osvrnuti na filtere koji bi trebali spriječiti korisnike da objavljuju dijelove ili cijela autorska djela. Pri tome ću se ograničiti samo na pisanu riječ. Za većinu korisnika će izrada takvog filtera biti nepremostiva teškoća i najvjerojatnije će, oni koji to moraju, koristiti neki servis treće strane. To će im stvoriti dodatne troškove, ali ni taj filter sigurno neće biti 100% točan. Najveći problem će najvjerojatnije biti u pogrešnim detekcijama.

Kad govorite o nekoj temi, recimo o nogometu, vrlo je teško biti potpuno originalan. Ako HNK Gorica pobijedi GNK Dinamo golom Dvornekovića u posljednoj minuti utakmice većina članaka će biti vrlo slična. Čak se i naslovi neće puno razlikovati. Zamislimo sad situaciju gdje svi domaći portali koriste isti filter. Prvi tekst koji dođe na provjeru će proći, ali ostali koji slijede neće zbog velike sličnosti teksta. I što će onda napraviti novinari? Morali budu promijeniti tekst da prođe filter. Naslov će morati slagati bolje od bilo kojeg driblera, iskoristiti riječi koje se rijetko koriste i poslagati ih u redoslijedu koji drugima neće pasti na pamet. S tekstom će biti isto tako. Za svaki gol morali budu smisliti originalni izraz za postići gol. Svaku reakciju igrača na terenu i trenera uz graničnu liniju morali budu opisati na jedinstven način. Možete li zamisliti kako će izgledati ti tekstovi? Meni je prvo pao na pamet onaj skeč iz Monty Pythona. Isto tako, samo s riječima.

Komentatori na portalima će biti na još većem iskušenju. Neki portali će krenuti linijom manjeg otpora pa će jednostavno izbaciti mogućnost korisničkih komentara. Isto kao što su neki američki portali blokirali EU korisnike. Jako će zaboravno biti kod onih koji će ostaviti komentare i uključiti filter. Hoće li komentari imati status autorskog djela i kakve će sve bravure izvoditi ovisnici o komentiranju? Možda bi bilo najbolje da izmisle neki svoj jezik.

Jezik? Da, to je još jedan dodatni problem. Hoće li filter morati prevoditi sadržaj na sve moguće svjetske jezike kako bi se provjerilo da netko nije napisao tekst ili komentar kojeg je samo preveo s nekog drugog jezika? I kakvu bi ogromnu bazu trebao imati taj filter? To je sigurno manji problem, veći je problem napraviti dovoljno brz i efikasan algoritam koji će u zadovoljavajućem vremenu vratiti odgovor. Trebalo bude izmisliti neki hash koji neće biti kao ovi standardni već će tekstovi koji su slični imati i jako slične hasheve.

Na kraju bi sve moglo završiti kao i s kolačićima. Svi će imati neke standardne poruke na koje će svi klikati bez da ih čitaju. Neki će imati filtere, ali će s njima imati više problema nego koristi. Direktivu će neki pokušati upotrijebiti protiv velikih igrača, ali će se mnogima od njih to razbiti o glavu. Neki će se naći i na sudu, manje iz opravdanih, a više iz trolerski razloga.

Hoće li autori imati kakve koristi od cijelog tog cirkusa? Ili će se i neki od njih morati braniti od optužbi? A možda im se dopadne ideja ministarstva smiješnog pisanja? Neki od njih već ni sada nisu daleko od toga.

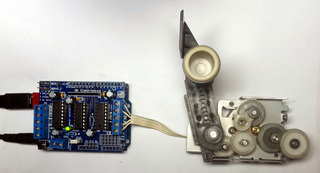

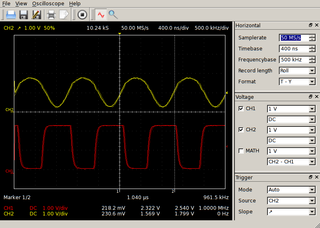

0:00:00.000,0:00:09.540 Hello. How are you? This is the participation part, come on 0:00:09.540,0:00:19.619 you're not the first I time here! OK, my name is Dobrica Pavlinušić and I will try to 0:00:19.619,0:00:25.019 persuade you today that you can do with your Linux something which you might not 0:00:25.019,0:00:32.969 have thought of by yourself I hope in a sense in last year and a half I noticed 0:00:32.969,0:00:38.610 that I am using microcontrollers for less and less and that I'm using my 0:00:38.610,0:00:44.760 Linux more and more for more or less the same tasks and in that progress process 0:00:44.760,0:00:51.239 I actually learned something which I want to share with you today in a sense 0:00:51.239,0:00:57.059 my idea is to tell you how to do something with your arm single board 0:00:57.059,0:01:05.729 computer in this lecture we will talk mostly about Allwinner boards but if 0:01:05.729,0:01:12.119 you want a hint if you want to buy some arm computer please buy the board 0:01:12.119,0:01:17.070 which is supported by armbian. armbian is the project which actually 0:01:17.070,0:01:21.360 maintains the distribution for our boards and is currently the best 0:01:21.360,0:01:27.689 distribution for arms aside from me raspbian for Raspberry Pi but raspbian 0:01:27.689,0:01:32.909 supports only Raspberry Pi but if you have any other board please take a look if 0:01:32.909,0:01:37.860 there is an armbian port. if there isn't try to contribute one and if you are 0:01:37.860,0:01:41.850 just deciding which board to buy my suggestion is buy the one which is 0:01:41.850,0:01:47.070 already supported on the other hand if you already did something similar you 0:01:47.070,0:01:52.079 might have found some references on the internet about device three and it 0:01:52.079,0:01:55.619 looked like magic so we'll try to dispel some of that 0:01:55.619,0:02:03.180 magic today unfortunately when you start playing with it one of the first things 0:02:03.180,0:02:10.459 you will want to do is recompile the kernel on your board so be prepared to 0:02:10.459,0:02:15.810 compile additional drivers if they are not already included. armbian again 0:02:15.810,0:02:21.890 wins because it comes with a lot of a lot of drivers already included and 0:02:21.890,0:02:28.950 this year I will not say anything which requires soldering which might be good 0:02:28.950,0:02:35.190 for you if you are afraid of the heat but it will be a little bit more than 0:02:35.190,0:02:41.519 just connecting few wires not much more for a start let's start with the warning 0:02:41.519,0:02:46.980 for example you have a arms in arms small arm board and you want to have a 0:02:46.980,0:02:52.019 real clock in it you know the one which keeps the time when the board is powered 0:02:52.019,0:02:57.239 off has the battery and so on if you buy the cheapest one from China which is 0:02:57.239,0:03:02.879 basically for Arduino you will buy the device which is 5 volt device which your 0:03:02.879,0:03:10.170 arm single board computer isn't. you can modify the board removing two resistors 0:03:10.170,0:03:16.409 if you want to but don't tell anyone I2C, and will mostly talk about i2c 0:03:16.409,0:03:23.819 sensors here, should be 5 volt tolerant so if you by mistake just connected and 0:03:23.819,0:03:30.569 your data signals are really 5 volt you won't burn your board but if you are 0:03:30.569,0:03:35.129 supplying your sensor with 5 volts please double-check that your that is 0:03:35.129,0:03:39.060 sure to connect it to your board and nothing bad will happen this is the only 0:03:39.060,0:03:44.970 warning I have for the whole lecture in this example I showed you a really 0:03:44.970,0:03:49.829 simple way in which you can take the sensors run i2cdetect, detect its 0:03:49.829,0:03:59.370 address in this case it's 68 - and then - load 1 kernel module and all of the 0:03:59.370,0:04:03.889 sudden your Raspberry Pi will have battery backed clock, just like your laptop does 0:04:03.889,0:04:12.569 but how did this journey all started for me? about two years ago I was very 0:04:12.569,0:04:18.060 unsatisfied with the choice of pinouts which you can download from the internet 0:04:18.060,0:04:23.820 I was thinking something along the lines wouldn't be it wouldn't it be nice if I 0:04:23.820,0:04:27.060 could print the pinout for any board I have 0:04:27.060,0:04:36.360 with perfect 2.54 millimeters pin spacing which I can put beside my pins 0:04:36.360,0:04:43.440 and never make a mistake of plugging the wire in the wrong pin and we all know 0:04:43.440,0:04:48.000 that plugging the wire in the wrong pin is always the first problem you have on 0:04:48.000,0:04:54.120 the other hand you say oh this is the great idea and you are looking at your 0:04:54.120,0:04:58.320 pin out which is from the top of the board and you are plugging the wires 0:04:58.320,0:05:02.160 from the bottom of the board and all of the sudden your pin out has to be 0:05:02.160,0:05:06.660 flipped but once you write a script which actually displays the pin out it's 0:05:06.660,0:05:12.950 trivially easy to get to add options to flip it horizontally or vertically and 0:05:12.950,0:05:18.870 create either black and white pin out if you are printing it a laser or color pin 0:05:18.870,0:05:26.639 out if you are printing it on some kind of inkjet so once you have that SVG 0:05:26.639,0:05:31.680 which you can print and cut with the scissors and so on it's just a script on 0:05:31.680,0:05:38.370 your machine so you could also have the common line output and then it went all 0:05:38.370,0:05:44.070 south I started adding additional data which you can see on this slide in 0:05:44.070,0:05:50.250 square brackets with the intention of having additional data for each pin for 0:05:50.250,0:05:56.250 example if I started SPI I want to see that this pin is already used so I will 0:05:56.250,0:06:01.830 not by mistake plug something into the SPI pins if I already have the SPI pin 0:06:01.830,0:06:08.150 started if I have the serial port on different boards your serial might be 0:06:08.150,0:06:14.880 UART4 on this particular CPU but it's the only serial in your Linux system so 0:06:14.880,0:06:21.270 it will be /dev/ttyS0 for example so I wanted to see all the data 0:06:21.270,0:06:27.180 and in the process I actually saw a lot of things which kernel know and I didn't 0:06:27.180,0:06:33.690 so today talking to you about it of course in comment line because you might 0:06:33.690,0:06:36.990 rotate your board well plug in the wires you can also do 0:06:36.990,0:06:45.540 all the flips and things you you already saw in the in the graphic part so let's 0:06:45.540,0:06:50.190 start with the sensor okay I said cheap sensor for eBay we'll get the cheap 0:06:50.190,0:06:54.840 sensors from eBay but this sensor is from some old PowerPC Macintosh. It was 0:06:54.840,0:06:59.580 attached to the disk drive and the Macintosh used it to measure the 0:06:59.580,0:07:04.890 temperature of the disk drive. you know that was in the times before the smart 0:07:04.890,0:07:12.060 had a temperature and I said hmm this is the old sensor, kernel surely 0:07:12.060,0:07:16.800 doesn't have support for it, but, oh look, just grep through the kernel 0:07:16.800,0:07:21.900 source and indeed there is a driver and this was a start I said hmm 0:07:21.900,0:07:29.580 driver in kernel, I don't have to use Arduino for it - now that I know that 0:07:29.580,0:07:35.070 driver is there and I have kernel module compiled, we said that prerequisite 0:07:35.070,0:07:40.950 is that we can compile the kernel, what do we actually have to program or do to 0:07:40.950,0:07:46.260 make this sensor alive? not more than this a echo in the middle of the slide 0:07:46.260,0:07:52.200 you just echo the name of the module and the i2c address the i2c addresses we saw 0:07:52.200,0:07:56.070 it before we can get it with i2cdetect by just connecting the sensor and 0:07:56.070,0:08:02.280 the new device will magically appear it will be shown in the sensors if you have 0:08:02.280,0:08:06.840 lm-sensors package installed but if you don't you can always find the same 0:08:06.840,0:08:14.160 data in /sys/ file system which is full of wonders and as we'll see in non 0:08:14.160,0:08:19.350 formatted way so the first two digits actually the last three digits digits 0:08:19.350,0:08:26.640 are the decimal numbers and the all the other are the the integer celsius in 0:08:26.640,0:08:34.470 this case. but you might say - I don't want to put that echo in my startup 0:08:34.470,0:08:40.380 script! or I would like to have that as soon as possible I don't want to depend 0:08:40.380,0:08:43.980 on the userland to actually start my sensor and believe 0:08:43.980,0:08:48.710 it or not because your smart phones have various sensors in 0:08:48.710,0:08:52.790 them, there is a solution in the Linux kernel for that and it's called the device tree 0:08:52.790,0:08:58.700 so this is probably the simplest form of the device tree which still doesn't look 0:08:58.700,0:09:06.020 scary, but it i will, stay with me, and it again defines our module the address 0:09:06.020,0:09:12.020 which is 49 in this case and i2c 1 interface just like we did in that echo 0:09:12.020,0:09:16.700 but in this case this module will be activated as soon as kernel starts up 0:09:16.700,0:09:24.590 as opposed to the end of your boot up process. one additional thing that kernel 0:09:24.590,0:09:31.000 has and many people do not use is ability to load those device trees 0:09:31.000,0:09:35.750 dynamically the reason why those most people don't use it is because they are 0:09:35.750,0:09:41.270 on to old kernels I think you have to have something along the lines of 4.8 0:09:41.270,0:09:47.510 4.8 or newer to actually have the ability to load the device trees live 0:09:47.510,0:09:54.470 basically you are you are using /sys/kernel/config directory and this script 0:09:54.470,0:10:00.620 just finds where you have it mounted and loads your device tree live word of 0:10:00.620,0:10:09.200 warning currently although it seems like you can do that on Raspberry Pi the API 0:10:09.200,0:10:14.270 is there the model is compiled, everything is nice and Diddley, kernel 0:10:14.270,0:10:18.350 even says that device tree overlay is applied, it doesn't work on the raspberry 0:10:18.350,0:10:24.950 pi because raspberry pi is different but if you gave a any other platform live 0:10:24.950,0:10:31.670 loading is actually quite nice and diddley. so now we have some sensor and it 0:10:31.670,0:10:37.310 works or it doesn't and we somewhat suspect that kernel 0:10:37.310,0:10:43.040 developers didn't write a good driver which is never ever the case if you 0:10:43.040,0:10:48.260 really want to implement some driver please look first at the kernel source 0:10:48.260,0:10:52.220 tree there probably is the implementation better than the one you 0:10:52.220,0:10:56.930 will write and you can use because the kernel is GPL you can use that 0:10:56.930,0:11:00.970 implementation as a reference because in my 0:11:00.970,0:11:05.890 small experience with those drivers in kernel they are really really nice but 0:11:05.890,0:11:10.960 what do you do well to debug it? I said no soldering and I didn't say but 0:11:10.960,0:11:15.010 it would be nice if I could do that without additional hardware. Oh, look 0:11:15.010,0:11:20.890 kernel has ability to debug my i2c devices and it's actually using tracing. 0:11:20.890,0:11:26.140 the same thing I'm using on my servers to get performance counters. isn't that 0:11:26.140,0:11:30.340 nice. I don't have to have a logic analyzer. I can just start tracing and 0:11:30.340,0:11:40.120 have do all the dumps in kernel. nice, unexpected, but nice! so let's get to the 0:11:40.120,0:11:45.790 first cheap board from China so you bought your arm single base computer 0:11:45.790,0:11:52.030 single board computer and you want to add few analog digital converters to it 0:11:52.030,0:11:57.070 because you are used to Arduino and you have some analog sensor or something and 0:11:57.070,0:12:05.620 you found the cheapest one on eBay and bought few four five six because they 0:12:05.620,0:12:10.990 are just the $ each, so what do you do the same thing we saw earlier you just 0:12:10.990,0:12:17.800 compile the models say the address of the interface and it will appear and 0:12:17.800,0:12:23.770 just like it did in the last example so everything is nice but but but you read 0:12:23.770,0:12:30.790 the datasheet of that sensor and the sensor other than 4 analog inputs also 0:12:30.790,0:12:38.860 has 1 analog output which is the top pin on the left denoted by AOUT, so you 0:12:38.860,0:12:42.850 want to use it oh this kernel model doesn't is not very 0:12:42.850,0:12:46.900 good it doesn't have ability to control that of course it does but how do you 0:12:46.900,0:12:50.680 find it? my suggestion is actually to search 0:12:50.680,0:12:57.190 through the /sys/ for either address of your i2c sensor, which is this 0:12:57.190,0:13:05.790 case is 48, or for the word output or input and you will actually get all 0:13:05.790,0:13:12.400 files, because in linux everything is a file, which are defined in driver of this 0:13:12.400,0:13:16.620 module, and if you look at it, there is actually out0_output 0:13:16.620,0:13:24.630 out0_output file in which you can turn output on or off so we are all 0:13:24.630,0:13:29.910 golden. kernel developers didn't forget to implement part of the driver for this 0:13:29.910,0:13:38.910 sensor all golden my original idea was to measure current consumptions of arm 0:13:38.910,0:13:44.490 boards because I'm annoyed by the random problems you can have just because your 0:13:44.490,0:13:49.470 power supply is not powerful enough so it's nice actually to monitor your power 0:13:49.470,0:13:55.740 usage so you will see what changes, for example, you surely... we'll get that, remind 0:13:55.740,0:14:01.250 me to tell you how much power does the the additional button take 0:14:01.250,0:14:05.130 that's actually interesting thing which you wouldn't know if you don't measure 0:14:05.130,0:14:10.950 current so you buy the cheapest possible eBay sensor for current, the right one 0:14:10.950,0:14:16.560 is the ina219 which is bi-directional current sensing so you 0:14:16.560,0:14:21.690 can put it between your battery and solar panel and you will see 0:14:21.690,0:14:26.160 whether the battery is charging or discharging, or if you need more channels 0:14:26.160,0:14:34.020 I like an ina3221 which has 3 channels, the same voltage 0:14:34.020,0:14:40.500 but 3 different channels, so you can power 3 arm single computers from one 0:14:40.500,0:14:47.670 sensor if you want to. and of course once you have that again the current is in 0:14:47.670,0:14:53.260 some file and it will be someting... 0:14:53.260,0:14:56.490 But, I promised you IOT? right? nothing 0:14:56.490,0:14:59.070 I said so far is IOT! where is the Internet? 0:14:59.070,0:15:05.100 where are the things? buzzwords are missing! OK, challenge 0:15:05.100,0:15:08.550 accepted! Let's make a button! you know it's like a 0:15:08.550,0:15:15.029 blink LED. So buttons, because I was not allowed to use soldering iron in 0:15:15.029,0:15:21.990 this talk, I'm using old buttons from old scanner. nothing special 3 buttons, in 0:15:21.990,0:15:25.350 this case with hardware debounce, but we don't care. 0:15:25.350,0:15:29.270 4 wires 3 buttons. how hard can it be? 0:15:29.270,0:15:36.240 well basically it can be really really simple this is the smallest 0:15:36.240,0:15:42.638 font so if you see how to read this I congratulate you! in this case I am 0:15:44.580,0:15:51.360 specifying that I want software pull up, in this first fragment on the top. 0:15:51.360,0:15:57.840 I could have put some resistors, but you said no soldering 0:15:57.840,0:16:03.540 so here I am telling to my processor. please do pull up on 0:16:03.540,0:16:09.540 those pins. and then I'm defining three keys. as you can see email. connect and 0:16:09.540,0:16:15.660 print. which generate real Linux keyboard events. so if you are in X and 0:16:15.660,0:16:20.730 press that key, it will generate that key, I thought it would it would be better to 0:16:20.730,0:16:26.400 generate you know the magic multimedia key bindings as opposed to A, B and C 0:16:26.400,0:16:31.940 because if I generated a ABC and was its console I would actually generate 0:16:31.940,0:16:39.140 letters on a login prompt which I didn't want so actually did it and it's quite 0:16:39.140,0:16:47.310 quite easy. In this case I'm using gpio-keys-polled which means that my CPU 0:16:47.310,0:16:52.500 is actually pulling every 100 milliseconds those keys to see whether 0:16:52.500,0:16:57.150 they their status changed and since the board is actually connected through the 0:16:57.150,0:17:01.890 current sensing - sensor I mentioned earlier I'm 0:17:01.890,0:17:11.209 getting additional: how many mA? every 100 milliseconds, pulling 3 keys? 0:17:13.010,0:17:19.350 60! I wouldn't expect my power consumption to rise by 60 milliamps 0:17:19.350,0:17:24.480 because I am pulling 3 keys every 100 milliseconds! but it did and because I 0:17:24.480,0:17:32.430 could add sensors to the linux without programming drivers, I know that! why am I 0:17:32.430,0:17:40.450 using polling because on allwinner all pins are not interrupt capable so in 0:17:40.450,0:17:44.520 the sense all pins cannot generate interrupts on allwinner 0:17:44.520,0:17:48.940 raspberry pi in this case is different on raspberry pi every pin can be 0:17:48.940,0:17:54.279 interrupt pin on Allwinner that is not the case. so the next logical question is 0:17:54.279,0:17:58.510 how do I know when I'm sitting in front of my board whether the pin can get the 0:17:58.510,0:18:05.440 interrupt or not? my suggestion is ask the kernel. just grap through the debug 0:18:05.440,0:18:10.720 interface of the kernel through pinctrl which is basically the the thing 0:18:10.720,0:18:19.570 which configures the pins on your arm CPU and try to find the irq 0:18:19.570,0:18:25.840 will surely get the list of the pins which are which are irq capable take in 0:18:25.840,0:18:30.370 mind that this will be different on different arm architectures so 0:18:30.370,0:18:34.419 unfortunately on allwinner it will always look the same because it 0:18:34.419,0:18:41.020 is allwinner architecture. actually sunxi ! but on the Raspberry Pi for 0:18:41.020,0:18:45.880 example this will be somewhat different but the kernel knows, and the grep is 0:18:45.880,0:18:51.490 your friend. so you wrote the device three you load it either live or some 0:18:51.490,0:18:57.279 something on some other way you connect your buttons and now let's try does it really 0:18:57.279,0:19:03.580 work? of course it does! we will start evtest which will show us all the 0:19:03.580,0:19:08.350 input devices we have the new one is the gpio-3-buttons, which is the same name 0:19:08.350,0:19:14.559 as our device three overlay and we can see the same things we saw in 0:19:14.559,0:19:20.230 device three we defined three keys with this event but we free-of-charge 0:19:20.230,0:19:25.990 got for example keyboard repeat because this is actually meant to be used for 0:19:25.990,0:19:31.630 keyboards our kernel is automatically implementing repeat key we can turn it 0:19:31.630,0:19:35.919 off but this is example of one of the features which you probably wouldn't 0:19:35.919,0:19:43.330 implement yourself if you are connecting those three keys to your Arduino but but 0:19:43.330,0:19:46.120 but this is still not the internet-of-things 0:19:46.120,0:19:49.789 if this is the Internet it should have some kind of 0:19:49.789,0:19:56.299 Internet in it some buzzwords for example mqtt and it really can just 0:19:56.299,0:20:01.759 install trigger-happy demon which is nice deamon which listens to 0:20:01.759,0:20:06.830 input events and write a free file configuration which will send the each 0:20:06.830,0:20:15.139 key pressed order MQTT and job done i did the internet button without a line 0:20:15.139,0:20:23.419 of code the configuration one side note here if you are designing some some 0:20:23.419,0:20:30.919 Internet of Things thingy which even if you are only one who will use it it's a 0:20:30.919,0:20:33.889 good idea but if you are doing it for somebody else 0:20:33.889,0:20:41.119 please don't depend on the cloud because I wouldn't like for my door to be locked 0:20:41.119,0:20:45.590 permanently with me outside just because my internet connection isn't working 0:20:45.590,0:20:52.999 think about it of course you can use any buttons in this case this was the first 0:20:52.999,0:20:57.070 try actually like the three buttons better than this one that's why this 0:20:57.070,0:21:02.419 these buttons are coming second and this board with the buttons has one 0:21:02.419,0:21:08.840 additional nice thing and that is the LED we said that will cover buttons and 0:21:08.840,0:21:15.379 LEDs right unfortunately this LED is 5 volts so it won't light up on 3.3 volts 0:21:15.379,0:21:24.139 but when you mentioned LEDs something came to mind how can I use those LEDs 0:21:24.139,0:21:29.029 for something more useful than just blinking them on or off we'll see you 0:21:29.029,0:21:34.039 later then turning them on or off it is also useful you probably didn't know 0:21:34.039,0:21:40.580 that you can use Linux triggers to actually display status of your MMC card 0:21:40.580,0:21:47.720 CPU load network traffic or something else on the LEDs itself either the LEDs 0:21:47.720,0:21:51.859 which you already have on the board but if your manufacturer didn't provide 0:21:51.859,0:21:56.679 enough of them you can always just add random LEDs, write device tree and 0:21:56.679,0:22:01.559 define the trigger for them, and this is an example of that 0:22:01.559,0:22:08.219 and this example is actually for this board which is from the ThinkPad 0:22:08.219,0:22:14.019 ThinkPad dock to be exact, which unfortunately isn't at all visible 0:22:14.019,0:22:20.169 on this picture, but you will believe me, has actually 2 LEDs and these 3 0:22:20.169,0:22:27.489 keys and 2 LEDs actually made the arm board which doesn't have any buttons on 0:22:27.489,0:22:36.609 it or status LEDs somewhat flashy with buttons. that's always useful on the 0:22:36.609,0:22:41.889 other hand here I would just want to share a few hints with you for a start 0:22:41.889,0:22:47.320 first numerate your pins because if you compared the the picture down there 0:22:47.320,0:22:54.579 which has seven wires and are numerated which is the second try be the first 0:22:54.579,0:23:00.579 nodes on the up you will see that in this case i thought that there was eight 0:23:00.579,0:23:06.999 wires so the deducing what is connected where when you have the wrong number of 0:23:06.999,0:23:14.229 wires is maybe not the good first step on the other hand we saw the keys what 0:23:14.229,0:23:21.639 about rotary encoders? for years I was trying to somehow persuade Raspberry Pi 0:23:21.639,0:23:28.149 1 as the lowest common denominator of all arm boards you know cheap slow and 0:23:28.149,0:23:33.789 so on to actually work with this exact rotary encoder the cheapest one from the 0:23:33.789,0:23:41.589 Aliexpress of course see the pattern I tried Python I try the attaching 0:23:41.589,0:23:48.579 interrupt into Python I tried C code and nothing worked at least didn't work 0:23:48.579,0:23:56.859 worked reliably and if you just write the small device tree say the correct 0:23:56.859,0:24:01.809 number of steps you have at your rotary encoder because by default it's 24 but 0:24:01.809,0:24:08.589 this particular one is 20 you will get perfect input device for your Linux with 0:24:08.589,0:24:11.579 just a few wires 0:24:12.390,0:24:20.260 amazing so we saw the buttons we saw the LEDs we have everything for IOT except 0:24:20.260,0:24:25.840 the relay so you saw on one of the previous pictures this relay box it's 0:24:25.840,0:24:31.980 basically four relays separated by optocouplers which is nice and my 0:24:31.980,0:24:39.670 suggestion since you can't in device three you can't say this pin will be 0:24:39.670,0:24:45.790 output but I want to initially drive it high or I want to initially drive it low 0:24:45.790,0:24:52.330 it seems like you can say that it's documented in documentation it's just 0:24:52.330,0:24:56.440 not implemented there on every arm architecture so you can write it in 0:24:56.440,0:25:02.440 device three but your device tree will ignore it. you but you can and this might be 0:25:02.440,0:25:07.240 somewhat important because for example this relay is actually powering all your 0:25:07.240,0:25:11.220 other boards and you don't want to reboot them just because you reboot the 0:25:11.220,0:25:16.270 machine which is actually driving the relay so you want to control that pin as 0:25:16.270,0:25:24.310 soon as possible so my suggestion is actually to explain to Linux kernel that 0:25:24.310,0:25:30.310 this relays is actually 4 LEDs which is somewhat true because the relay has the 0:25:30.310,0:25:36.460 LEDs on it and then use LEDs which do have the default state which works to 0:25:36.460,0:25:41.410 actually drive it as soon as possible as the kernel boot because kernel will boot 0:25:41.410,0:25:45.640 it will change the state of those pins from input to output and set them 0:25:45.640,0:25:52.000 immediately to correct value so you hopefully want want power cycle your 0:25:52.000,0:25:56.410 other boards, and then you can use the LEDs as you would normally use them in 0:25:56.410,0:26:01.420 any other way if LEDs are interesting to you have in 0:26:01.420,0:26:06.340 mind that on your on each of your computers you have at least two LEDs but 0:26:06.340,0:26:10.810 this caps lock and one is non lock on your keyboard and you can use those same 0:26:10.810,0:26:15.190 triggers I mentioned earlier on your existing Linux machine using those 0:26:15.190,0:26:20.980 triggers so for example your caps lock LED can blink as your network traffic 0:26:20.980,0:26:29.380 does something on your network really it's fun on the other hand if you have a 0:26:29.380,0:26:36.370 Raspberry Pi and you defined everything correctly you might hit into some kind 0:26:36.370,0:26:42.370 of problems that particular chip has default pull ups which you can't turn on 0:26:42.370,0:26:49.059 for some pins which are actually designed to be clocks of this kind or 0:26:49.059,0:26:54.220 another so even if you are not using that pin as the SPI clock whatever you 0:26:54.220,0:26:59.110 do in your device tree you won't be able to turn off, actually you will turn 0:26:59.110,0:27:04.240 off the the setting in the chip to for the pull up but the pull up will be 0:27:04.240,0:27:08.140 still there actually thought that there is a hardware resistor on board but 0:27:08.140,0:27:14.980 there isn't it's inside the chip just word of warning so if anything I would 0:27:14.980,0:27:21.309 like to push you towards using Linux kernel for the sensors which you might 0:27:21.309,0:27:25.570 not think of as the first choice if you just want to add some kind of simple 0:27:25.570,0:27:32.080 sensor to to your Linux instead of Arduino over serial port which I did and 0:27:32.080,0:27:38.049 this is the solution which might with much less moving parts or wiring pie and 0:27:38.049,0:27:43.090 in the end once you make your own shield for us but if I you will have to write 0:27:43.090,0:27:49.480 that device tree into the EEPROM anyway so it's good to start learning now so I 0:27:49.480,0:27:53.890 hope that this was at least useful very interesting and if you have any 0:27:53.890,0:28:02.140 additional questions I will be glad to to answer them and if you want to see 0:28:02.140,0:28:07.780 one of those all winner board which can be used with my software to show the pin 0:28:07.780,0:28:12.460 out here is one board which kost actually borrowed me yesterday and in which 0:28:12.460,0:28:18.549 yesterday evening I actually ported the my software which is basically just 0:28:18.549,0:28:22.330 writing the definition of those pins over here and the pins on the header 0:28:22.330,0:28:26.950 which I basically copy pasted from the excel sheet just to show that you can 0:28:26.950,0:28:32.830 actually do that for any board you have with just really pin out in textual file 0:28:32.830,0:28:36.370 it's really that simple here are some additional 0:28:36.370,0:28:38.300 links and do you have any questions? 0:28:39.420,0:28:41.420 [one?]

Neće.

Niste baš uvjereni? CEO LinkedIn-a kaže da će do 2020. više od 5 milijuna poslova biti izgubljeno zbog novih tehnologija. S druge strane Gartner predviđa da će 1,8 milijuna poslova biti izgubljeno, ali da će umjesto njih biti kreirano 2,3 milijuna novih poslova. U firmi u kojoj radim trećina ljudi radi na poslovima koji nisu postojali prije nekoliko godina. Ono što je sigurno je da će neki ljudi zbog novih tehnologija izgubiti posao, ali će najvjerojatnije brzo naći drugi. Roboti nas neće ostaviti bez posla, samo će nas natjerati da radimo poslove koje oni ne mogu obavljati.

Godine 1900. u SAD-u je 70% radnika radilo u poljoprivredi, rudarstvu, građevini i proizvodnji. Zamislite da im je došao vremenski putnik s informacijom da će za 100 godina samo 14% radnika raditi u tim djelatnostima i da ih je pitao čime će se baviti 56% ostalih radnika?! Siguran sam da ne bi znali odgovor, kao što ni mi ne znamo odgovor na pitanje što će raditi vozači kad ih zamijene samovozeća vozila.

Neki od njih će postati treneri umjetne inteligencije. Već i danas ti poslovi postoje, strojevi uče uz pomoć ljudi, neki novinari nazivaju to prljavom tajnom umjetne inteligencije. Budućnost će donijeti još više različitih poslova u toj djelatnosti.

Nekad se dogodi da su posljedice uvođenja novih tehnologija suprotne od očekivanja. Sjetite se samo predviđanja o uredima bez papira. Na kako je tehnologija omogućila jednostavniji ispis dobili smo urede koji proizvode prokleto puno papira. Moguće je da takav efekt papira u nekoj varijaciji pogodi i industriju umjetne inteligencije. Posla će biti, samo budite spremni na stalne promjene.

Volim slatka vina. Voćne arome, diskretno slatki alkohol. Život je ionako od previše gorkih okusa da bi se odrekli slatkog.

Jedno od najboljih slatkih vina koje sam pio je traminac vinogradara i vinara Mihajla Gerštmajera . Njegova vina nećete naći na policama dućana, možete ih kupiti u njegovoj vinariji i stvarno se radi o vrhunskim vinima po povoljnim cijenama.

Dugo nakon toga pokušavao sam naći neki jednako dobar traminac na policama naših dućana, ali bez uspjeha.

Muškat sam izbjegavao dok nisam slučajno u dućanu uzeo "Muškat žuti" obitelji Prodan. Nasjeo sam na akcijsku prodaju. Vau. Odličnog okusa, baš po volji mojim nepcima. Polica u dućanu je opustošena na tom mjestu gdje je bio taj muškat. Za probu sam uzeo jedan drugi žuti muškat. Dobar je, ali ne kao od Prodana.

Pop pjesme su kao slatka vina. Tu također imamo odličnih stvari ali i puno, puno više slatkih vodica od kojih boli glava. Zaboravimo sad glavoboljčeke, jedan od odličnih izbora je Live@tvornica Kulture Vlade Divljana i Ljetnog Kina.

Možda ne priliči da sad u crtici o vinima spominjem i pivo, ali zadnju godinu-dvije sporadično sam koristio aplikaciju Untappd kako bi pratio (i pamtio) uživanja u pivi. Sad me više interesiraju vina pa sam se sjetio potražiti odgovarajuću aplikaciju. Vivino izgleda dosta dobro, čak i naša imaju dosta ocjena a ima i preko tisuću hrvatskih korisnika s profilima.

P.S. Ovo je povratak blogu nakon duže pauze. Možda ću vas iznenaditi s nekim novim temama, moglo bi biti manje IT-a, a više nekih drugih stvari zbog kojih me svrbe prsti. Do čitanja. :-)

Mogli bi napraviti istraživanje, analizirati tekstove članaka prvih 100 domaćih portala, ali ono bi nam sigurno otkrilo da je najčešći tekst koji se pojavljuje u poveznicama riječ ovdje.

Smatraju li novinari i urednici da je prosječni posjetitelj njihovih stranica toliko neuk da ne bi znao prepoznati poveznicu i kliknuti na nju ako ne piše ovdje? Ili je to sindrom lonca, predaja govori da se to tako radi, a i ponekad je prevelika gnjavaža smisliti pravi tekst za poveznicu.

Možda su za to krivi i SEO stručnjaci? Kad je prvi novinar napisao tekst za poveznicu, onako kako bi to trebalo raditi, skočio je SEO stručnjak, lupio ga štapom po prstima i rekao da ne smije tako olako prosipati link đus. To je osobito važno ako se uzme tekst od konkurentskog portala. Pa nećemo valjda njima povećavati značaj, briši to, piši ovdje. Pravilo je postavljeno, svi ga se drže, kao u eksperimentu s majmunima i bananama.

Legenda kaže da Google (a kad govorimo o optimizaciji za tražilice onda i ne gledamo druge) na temelju teksta poveznice radi link profil. Pa ako sadržaj stranice optimizirate za neku riječ, i svi linkovi imaju taj tekst, da će vas Google penalizirati. Baš me zanima što Google misli o riječi iz naslova. Ne smijem je puno puta spominjati u tekstu jer će misliti da se pokušavam dobro pozicionirati za nju ;-).

Znam da meni ne vjerujete, jer nitko nije prorok u vlastitom selu, a i nisam neki SEO stručnjak, pa budem naveo nekoliko članaka o pravilnim poveznicama na koje sam naišao brzim guglanjem. To je dobar dokaz da znaju pisati poveznice jer ne bi bili među prvih par rezultata, zar ne?

Anchor Text Best Practices For Google & Recent Observations je napisao SEO stručnjak Shaun Anderson i u tekstu jasno govori "Don’t Use ‘Click Here’". Zanimljiva su i njegova opažanja o optimalnoj dužini tog teksta.

I čuveni Moz spominje Anchor Text pa kaže "SEO-friendly anchor text is succinct and relevant to the target page (i.e., the page it's linking to)."

Pravilni opis poveznice je sama suština weba kao takvog. On je svojevrsni ekvivalent fusnote u štampanom tekstu. Zamislite da čitate tekst u kojem se, umjesto riječi na koje se napomene odnose, svako malo pojavi riječ ovdje. To bi bilo malo naporno za čitanje.

Optimizirajte svoje tekstove za čitanje, za korisnike. Poveznice bi se trebale izgledom razlikovati od ostalog teksta (to se definira CSS stilom za vašu web stranicu) te kratko i jasno opisati sadržaj na koji vode. I to je sve što bi trebali znati za uspješno sudjelovanje u kampanji iskorijenimo ovdje.

Predizborno je vrijeme. To znači da će gradonačelnici i načelnici širom zemlje predstavljati različite projekte kojima žele pokazati i dokazati kako zaslužuju još jedan mandat. Neki od njih žele pokazati da idu u korak s vremenom pa slijede neke svjetske trendove u lokalnoj upravi. Čak se promoviraju i na informatičkim konferencijama.

Tako smo doznali da je Virovitica postala pametan grad, službeno je pokrenut portal otvorenih podataka te portal za prijavljivanje komunalnih nepravilnosti.

Portal MyCity još ne možemo vidjeti jer nas tamo dočekuje samo IIS default stranica. Portal otvorenih podataka je funkcionalan i na njemu imamo, slovima i brojkama, 6 (šest) skupova podataka. Baš me zanima kako će tih 6 Excel datoteka pomoći rastu digitalne ekonomije i koliko će to Grad Virovitica platiti?! U priopćenjima nema tog podatka.

Bilo je potpuno nepotrebno da Grad Virovitica pokreće taj projekt jer već postoji Portal otvorenih podataka Republike Hrvatske na kojem su mogli postaviti tih svojih 6 datoteka. Tu mogućnost iskoristili su gradovi Rijeka, Zagreb i Pula koji su zajedno objavili 123 skupa podataka. Da, ali s čime bi se onda gradonačelnik Kirin hvalio?

Najavljuje se da su Velika Gorica i Varaždin u pilot fazi ovakvog projekta. To valjda znači da se ne mogu odlučiti kojih 6 Excel tablica će uploadati. Službenici u tim gradovima su sigurno pod velikim pritiskom da to naprave prije lokalnih izbora.

Jutros sam opet dobio jedan od tuđih mailova. E-mail adresa je moja, ali sadržaj nije namijenjen meni. Osoba se registrirala, navela moju e-mail adresu kao svoju, naručila neke stvari, dobila potvrdu narudžbe. Web trgovina koju je osoba koristila ne provjerava e-mail adrese s kojima se korisnici prijavljuju.

To je nešto najosnovnije, prije bilo kakve radnje trebali bi provjeriti e-mail adresu (uobičajeno je slanje tokena/kontrolnog koda na tu adresu). Ako se radi o servisu gdje trošite novce, a nisu implementirali taj osnovni prvi korak, kako im možete vjerovati da će brojevi vaših kartica ili neki drugi povjerljivi podaci biti sigurno obrađeni i sačuvani? Bolje je da ne koristite takve servise.

U najgorem slučaju može se dogoditi da greškom navedete e-mail osobe koja će zloupotrijebiti priliku. Registrirate se u nekom web dućanu gdje ste ostavili broj svoje kreditne kartice, sretni ste jer ima 1-Click-to-buy mogućnost i naveli ste pogrešan e-mail. Zločesti sretnik će postaviti novu zaporku (jer kao vlasnik navedene e-mail adrese to može napraviti), promijeniti adresu dostave i veselo kupovati. Vama će biti jasno što se dogodilo najvjerojatnije tek kad dobijete račun za svoju kreditnu karticu.

Zadnjih par godina primao sam svakojake poruke. Od računa, predračuna i ponuda do raznih zapisnika, seminara pa sve do ljubavnih poruka punih glupavih pjesmica i YouTube linkova na cajke. Kad sam dobre volje vratim poruku pošiljatelju i upozorim da pogrešnu adresu, a kad nisam onda poruke šaljem u smeće.

Jedini slučaj kad svaki put nastojim razriješiti zabunu su nalazi koje bolnice šalju bolesnicima ili obavijesti o zakazanom terminu pregleda. Ono što me užasava je da za tako ozbiljne stvari naš zdravstveni sustav koristi tako nepouzdane metode. Nekome pitanje života može ovisiti o pogrešno poslanoj poruci. Čemu nam služi sustav e-Građani ako ga ne koriste za takve namjene?

Među širokim narodnim masama je stvorena fama o hackerima koji uz pomoć računala provaljuju u tuđa računala, kradu novac, identitete korisnika. U praksi to uglavnom nije tako jer najlakši način za takve nestašluke napasti najslabiju kariku: ljude. Kevin Mitnick slavu je stekao socijalnim inženjeringom što je učeniji naziv za prevaru ljudi. Lakše je prevariti ljude nego provaliti na neki poslužitelj.

Prije par mjeseci odlučio sam kupiti kino ulaznice online. Kako rijetko kupujem ulaznice online nije me iznenadilo da mi spremljena zaporka (u tu svrhu koristim KeePassX) nije radila. Pripisao sam to vlastitom nemaru, kod manje važnih servisa zaboravim unijeti promjenu zaporke u KeePassX. Prošao sam proceduru za zaboravljenu zaporku, ponovno se prijavio, odabrao vrijeme i mjesta u dvorani i došao do trenutka prije plaćanja. Odjednom mi se učinilo da nešto nije u redu. Provjerim podatke i vidim svoje ime, ali drugo prezime. Pogledam predstave koje sam gledao i vidim par filmova za koje sam siguran da nisam gledao prije par mjeseci. Netko je kupovao karte koristeći moj korisnički račun. Nisam koristio mogućnost pamćenja broja moje kartice za bržu kupnju pa nije bilo nikakve štete (provjerio sam i izvode za moju karticu), preuzimatelj nije kupovao karte mojim novcima niti je on ostavio broj svoje kartice.

Nakon nekoliko poruka sa službom za korisnike uspio sam otkriti kako je došlo do sporne situacije. Upozorio sam ih da imaju sigurnosni problem. Nitko nije provalio na njihov poslužitelj, nisu im iscurili podaci, ali imali su grešku u proceduri postupanja i sve se svelo na ljudsku grešku. Uz postojeću online prijavu imaju mogućnost da korisnik ispuni obrazac i odmah dobije pristupne podatke. Preuzimatelj mojeg računa je to i napravio, ispunio je obrazac, ali umjesto svoje naveo je moju elektroničku adresu. I tu ulazi u igru ljudski nemar ili neznanje - operator je po e-mail adresi našao moj korisnički račun, postavio nove pristupne podatke i dao iz preuzimatelju. Bez ikakve provjere navedene e-mail adrese.

Sad vam je jasno kako je lako preuzeti nečiji korisnički račun kod tog prikazivača?! Dovoljno je da znate e-mail adresu postojećeg korisnika i predate ručno ispunjeni obrazac. Ako je taj odabrao mogućnost brze kupnje ulaznica možete besplatno uživati u kino predstavama. Dok vas ne uhvate.

Službi za korisnike pokušao sam dokazati kako imaju veliki problem, ali oni s kojima sam komunicirao ili nisu razumjeli ili nisu željeli priznati da imaju problem. Čak mi je rečeno i da je nemoguće da se to dogodi, ali eto dogodilo se meni. :-)

Hackeri u pravilu ne provaljuju u računala i uvreda je tim imenom nazivati one koji su vjerni izvornoj ideji hakerstva. Kriminalce (nazovimo ih pravim imenom) obično zovu crackerima ili black hat hackerima.

Provjeravanje e-mail adresa korisnika je prvi i logični korak. Druga mogućnost je da za identifikaciju korisnika koristite odgovarajući pouzdani servis. Državne i javne ustanove u Hrvatskoj bi za to mogle koristiti NIAS.

Osnovni problem kod NIAS-a je i taj što su nepotrebno omogućili cijeli niz izdavatelja vjerodajnica treće strane i na taj način unijeli dodatne komplikacije i povećali sigurnosni rizik. Ali to je posebna tema...

Dispelling one particular critique of UBI

Universal Basic Income (UBI) has started appearing with increasing regularity in research and experiments all around the world (Finland, India, …Oakland?). Of course, the scheme has both benefits and drawbacks, its proponents and critics, but in the absence of experience from a large-scale long-running UBI program, it is hard to evaluate what would actually happen.

One popular critique is that giving everyone some amount of money would simply raise the floor for prices. Inflation would do the rest and the scheme would quickly cancel itself out.

This critique is actually easy to dispel, and I believe it’s useful to do so, so we can focus on other actual challenges (of which are many). Here we go:

If everyone gets a fixed sum of extra cash, what’s to stop merchants from raising prices? Other merchants. Consider bakeries. They could raise prices, knowing everyone has extra money to pay for bread. However, all it takes is one savvy baker to recognize he or she could raise the prices just a little lower than everyone else, enough for people to start preferring their shop instead of the competition. The baker could increase their market share significantly (open a chain of “cheap” bakeries). But the competition would quickly catch up to the rascal’s plan and lower their prices accordingly. The baker could lower them still a bit more, and so on…

Where would that downward pressure stop? At the point at which there is no point in running the bakery (the profit is too small). Which is the exact same price point as before[0], and doesn’t depend on the purchasing power of the consumers (that is, it’s not tied to how much money people have).

However, in this description I’ve made two important assumptions: that there is enough competition between bakers, and that bread production can be ramped up and down as demand increases. If either of these assumptions is false, the picture becomes less rosy.

Start with competition. If there is only one baker (a monopoly), he or she is always in the position to charge as much as they like (which is typically just below the point at which many people would stop eating bread and switch to something else, say, rice). In this case, introducing UBI would directly lead to price increase, unless the price itself was regulated (as is the case with utilities, which are natural monopolies).

The other assumption is that the production can adapt to the demand. When this is not the case, that is, when the supply is limited, the competition between consumers for a limited number of products, will almost certainly gobble up any extra money people receive. Spectacular example of this is the housing market in Silicon Valley, where an ever increasing number of IT workers with sky-high salaries competes for a very limited amount of housing.

An even better example are tuitions for prestige universities in the US. Since it is not in the universities’ interest to increase number of students, increasing the money supply for the prospective students with student loans meant that students were now able to pay more for the same thing and that universities could simply increase the tuition fee[1]. Increasing the money supply to students via UBI would have the same effect.

Coming back to validity of the critique that UBI would simply result in price increases, we can see that it rests on the question of whether people spend more money for commodity products, or on limited-supply products or monopolies.

The recent stats from US Bureau of Labor Statistics[2] show that roughly a third of the expenses are housing related. To me, this shows that those in very skewed housing markets (like Silicon Valley, New York, or London) might see price increases due to UBI, but for the most people (that live in healthier housing markets) the housing cost shouldn’t be affected. Other costs are related to more commoditized goods and services so they should be even less affected.

This doesn’t mean that Universal Basic Income is definitely a net benefit for society. There are many other issues to examine, challenges to be sorted out, and the jury will be out on its effects for a long time.

But at least we’ve got one out of the way.

[0] Actually, it could be even lower. If UBI replaces minimum wage, workers may decide they’re willing to work for a little less, thereby reducing the cost of bread. I’m not an economist, statistician, or a social scientist so I will not venture into discussion on whether that’d be a good thing overall.

[1] That’s not to say student loans weren’t beneficial overall. It may very well be that the system allowed more students to attend the universities as not all schools’ prices hiked (and not nearly by the same amount as the top ones), and allowed more middle-class and poorer students the opportunity. I know too little about the matter to draw any conclusions either way.

[2] I imagine stats for other western countries would show qualitatively similar amounts.

Voice-controlled AI assistants are advanced enough to be dangerous

Useful voice recognition, combined with AI capable of parsing specific phrases and sentences, is finally here. Amazon’s Alexa, Apple’s Siri and Google’s Assistant are showing us what the future will be like.

However, the safeguards are lagging behind the capabilities, as the recent example of a TV anchor ordering dollhouses shows. The fact that the system picked up voice from the TV and interpreted it as a command sounds funny, but should be terrifying to anyone remotely interested in computer security. It sounds like a Hollywood adaptation of the classic remote code execution bug — but it’s not a fantasy any more.

We’re so happy that we have machines that can listen to us, that in our rush to use / buy / create them, we haven’t stopped and made sure they listen only to us. That’s why a kid can order a dollhouse while parents are asleep or away, TV anchor reporting on that can order hundreds more, and we can play fun pranks when visiting friends by ordering tons of toilet paper while they’re not looking :–)

Accidentally ordering something online can be terribly inconvenient and cost you a fine buck, but as these assistants get control over more devices in our homes and our lives (IoT anyone?), we’ll start seeing real problems. Here’s a stupid trick that might just work in a year of so: Alexa, unlock the front door!

Mobile phone voice assistants show one way of handling this: by requiring the phone to be unlocked for (most) commands to work. Yet while may make sense for phones (and only slightly inconvenience the user), it’s a non-starter for home automation systems. If I have to walk over and press a button, I might just as well do the entire action (such as turning the light off, or unlocking the door) myself.

Another possibility is speaker recognition. By analyzing how the words are uttered, not just what they are, such systems can distinguish voice of the authorized user. However, like many other biometric systems, it is easily fooled by a facsimile of the user — in this case, a simple recording. Thus anyone with a mobile phone can “hack” this kind of security.

More effective, and only slightly more inconvenient, would be the combination of requiring the physical presence of the user in the room (for example, by sensing their mobile phone, smartwatch, or other personal item they’d carry around most of the time) and speaker recognition. In this case, even if a hack is attempted, the user themselves would be around to prevent it.

So the good news is, it shouldn’t be that hard to build more secure voice-controlled systems. The bad news is, as we’ve seen with huge botnets made of compromised IoT devices, many companies in home automation space currently have no experience or incentives to focus more on security.

Voice-controlled AI assistants are here to stay, and it’s a good thing — they’re mightily convenient. But expect more fun anecdotes and scary stories in the years ahead.